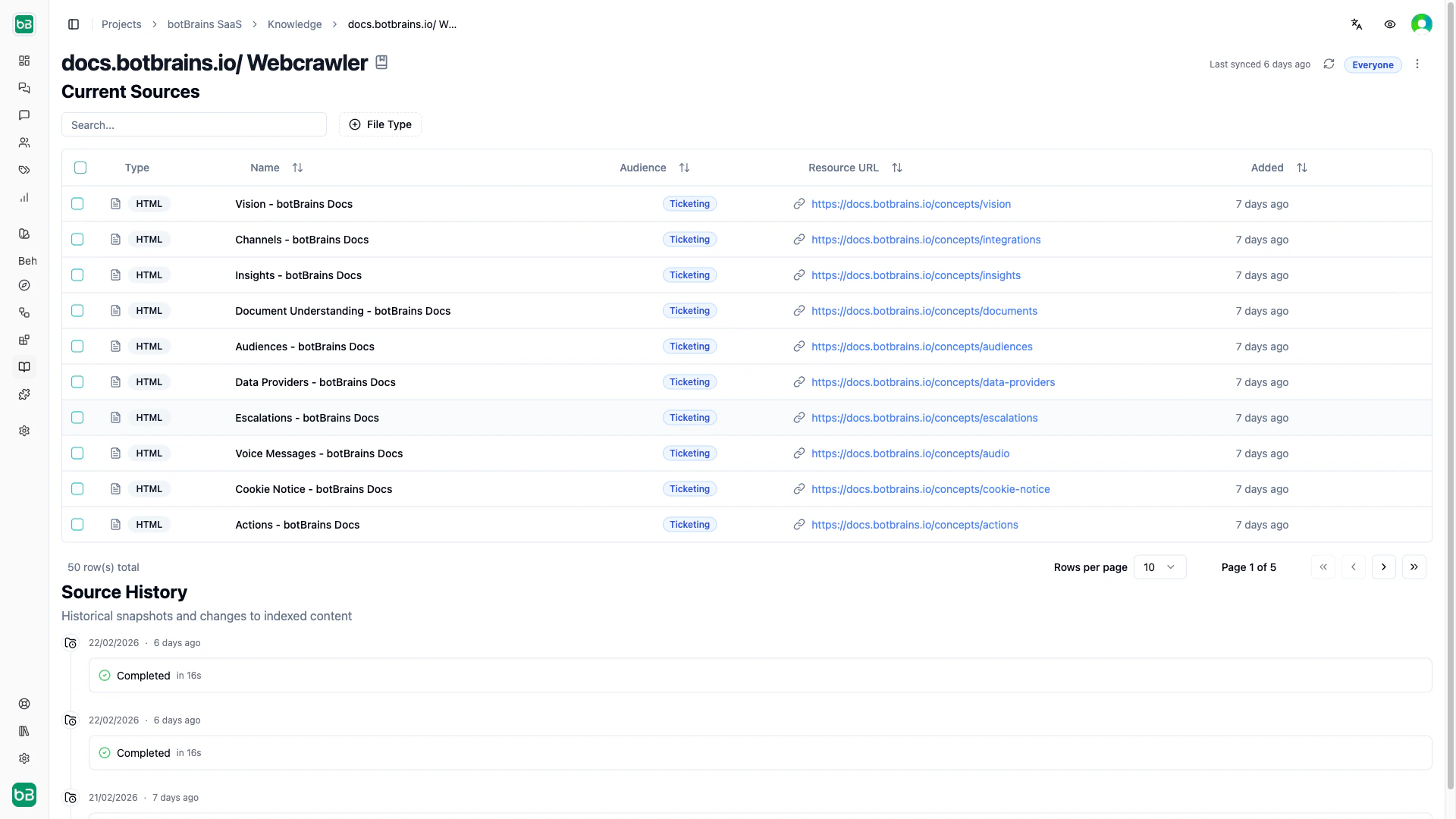

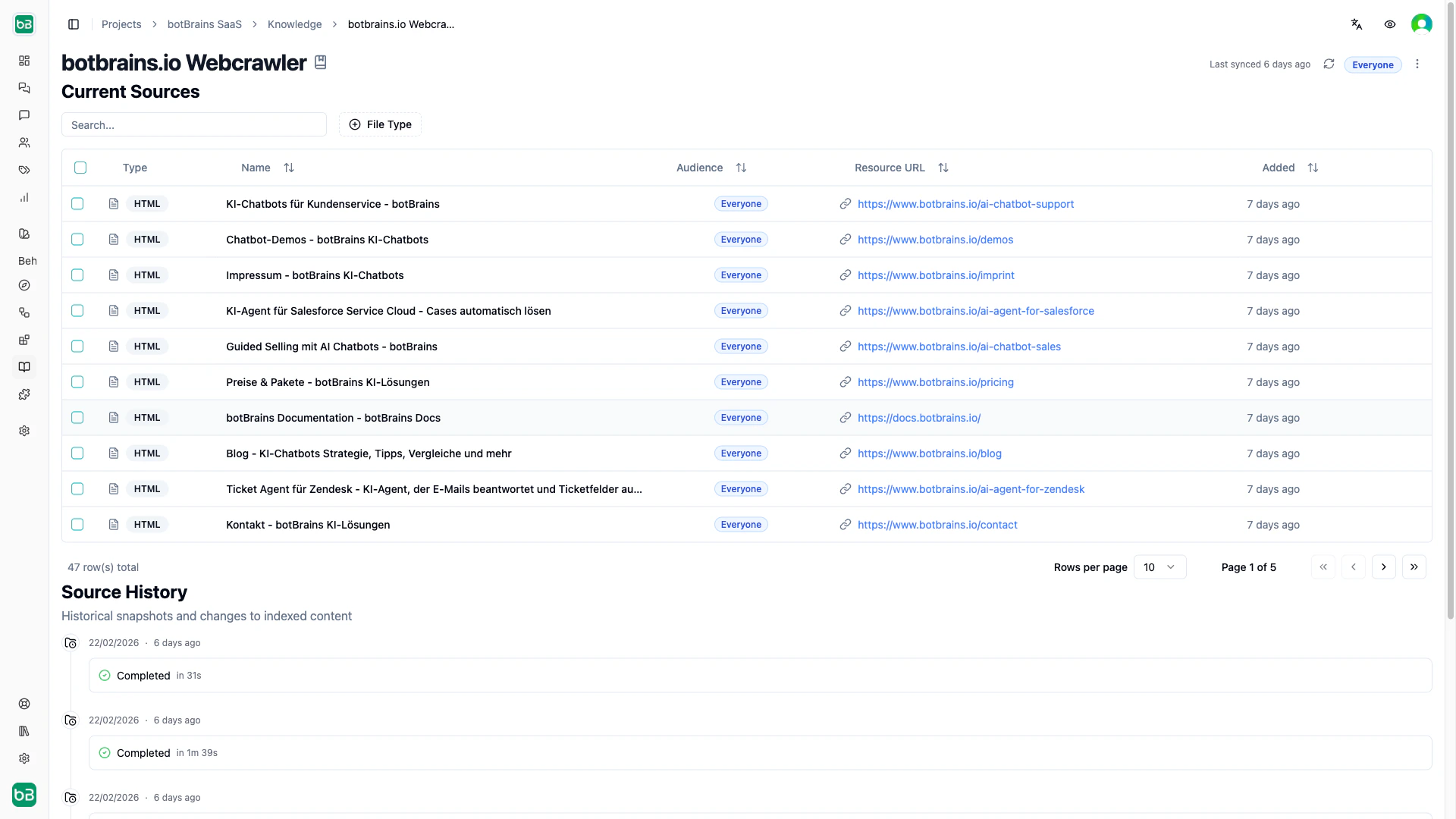

Data providers connect your AI to existing knowledge sources and keep them in sync. Each sync creates a snapshot, a point-in-time record of all discovered content. Compare snapshots to see what changed between syncs. You can assign an audience to a data provider so all new sources it discovers are automatically scoped to that segment.

Web Crawler

Crawl websites, documentation sites, and help centers starting from one or more seed URLs.

Crawl Scope

| Scope | Allows | Blocks |
|---|---|---|
| Same Domain | All subdomains under the root domain | Other domains |
| Same Hostname | Exact subdomain only | Other subdomains, other ports |
| Same Origin | Exact hostname, protocol, and port | Everything else |
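
The three scopes can be read as increasingly strict comparisons between a seed URL and a candidate URL. The sketch below is one possible interpretation of the table, not the crawler's actual implementation. The function name `in_scope` and the scope strings are hypothetical, the root domain is approximated as the last two hostname labels (a real crawler would more likely consult the Public Suffix List), and Same Hostname is assumed to ignore the protocol while still rejecting a different explicit port.

```python
from urllib.parse import urlsplit

# Default ports, used only for the same-origin comparison.
DEFAULT_PORTS = {"http": 80, "https": 443}


def _root_domain(host: str) -> str:
    # Assumption: the root domain is the last two labels.
    # This is wrong for suffixes such as "co.uk".
    return ".".join(host.split(".")[-2:])


def in_scope(seed: str, candidate: str, scope: str) -> bool:
    """Return True if `candidate` may be crawled given `seed` and `scope`."""
    s, c = urlsplit(seed), urlsplit(candidate)
    s_host = (s.hostname or "").lower()
    c_host = (c.hostname or "").lower()

    if scope == "same_domain":
        # Any subdomain under the seed's root domain is allowed.
        return _root_domain(c_host) == _root_domain(s_host)

    if scope == "same_hostname":
        # Exact hostname and the same explicit port; protocol is ignored.
        return c_host == s_host and c.port == s.port

    if scope == "same_origin":
        # Protocol, hostname, and effective port must all match.
        s_port = s.port or DEFAULT_PORTS.get(s.scheme.lower())
        c_port = c.port or DEFAULT_PORTS.get(c.scheme.lower())
        return (c.scheme.lower(), c_host, c_port) == (s.scheme.lower(), s_host, s_port)

    raise ValueError(f"unknown scope: {scope!r}")
```

With a seed of `https://docs.example.com/`, a link to `https://blog.example.com/` passes only under Same Domain, while `http://docs.example.com/` passes under Same Domain and Same Hostname but fails Same Origin because the protocol differs.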

Render Mode

| Mode | Use when |
|---|---|
| Automatic | Default. Decides per page whether to render JavaScript |
| JavaScript | Single-page apps and dynamic content |
| No JavaScript | Static sites (faster) |

URL Controls

- URL Limit. Maximum pages to crawl (1–20,000). Start small (50–100) to verify your config, then increase.

- Concurrency Limit. Simultaneous requests (1–50). Use lower values to avoid overloading the target site.

- Query Aware. Treat URLs with different query strings as separate pages.

- Fragment Aware. Treat URL fragments (#section) as separate pages.
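
To illustrate how these settings might interact, the sketch below reduces each URL to a key that decides whether two URLs count as the same page, then applies a page limit. It is a minimal illustration under stated assumptions, not the crawler's actual logic. The names `page_key` and `select_pages` and their parameters are hypothetical, and concurrency is left out.

```python
from urllib.parse import urlsplit, urlunsplit


def page_key(url: str, query_aware: bool = False, fragment_aware: bool = False) -> str:
    """Build the key used to decide whether two URLs are the same page."""
    u = urlsplit(url)
    # Drop the query string and fragment unless the matching option is on.
    query = u.query if query_aware else ""
    fragment = u.fragment if fragment_aware else ""
    return urlunsplit((u.scheme, u.netloc, u.path, query, fragment))


def select_pages(urls, url_limit, query_aware=False, fragment_aware=False):
    """Keep the first URL seen for each page key, up to `url_limit` pages."""
    seen = set()
    selected = []
    for url in urls:
        if len(selected) >= url_limit:
            break
        key = page_key(url, query_aware, fragment_aware)
        if key in seen:
            continue  # Same page under the current settings.
        seen.add(key)
        selected.append(url)
    return selected
```

With both options off, `https://example.com/docs?page=2#intro` and `https://example.com/docs` map to the same key, so only the first one encountered counts toward the limit. Turning on Query Aware makes `?page=1` and `?page=2` distinct pages, which can use up the URL limit quickly on paginated or filtered listings.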

Include/Exclude Filters

Use glob patterns to control which pages to crawl.

Collections

Upload PDF, Word, PowerPoint (PPTX), Markdown, plain-text, and Excel files, along with nearly every other common format you keep information in. Because files must be uploaded manually and cannot be synced on a schedule, this provider is best for static content that doesn’t change often. Examples include internal procedures, policy documents, or quick knowledge additions.